Most engine comparisons you read are written by people who have not put the engines into a real production pipeline. They benchmark on stock prompts, take five generations from each model, and rank by aesthetic gut feel. That is not how a working studio evaluates infrastructure.

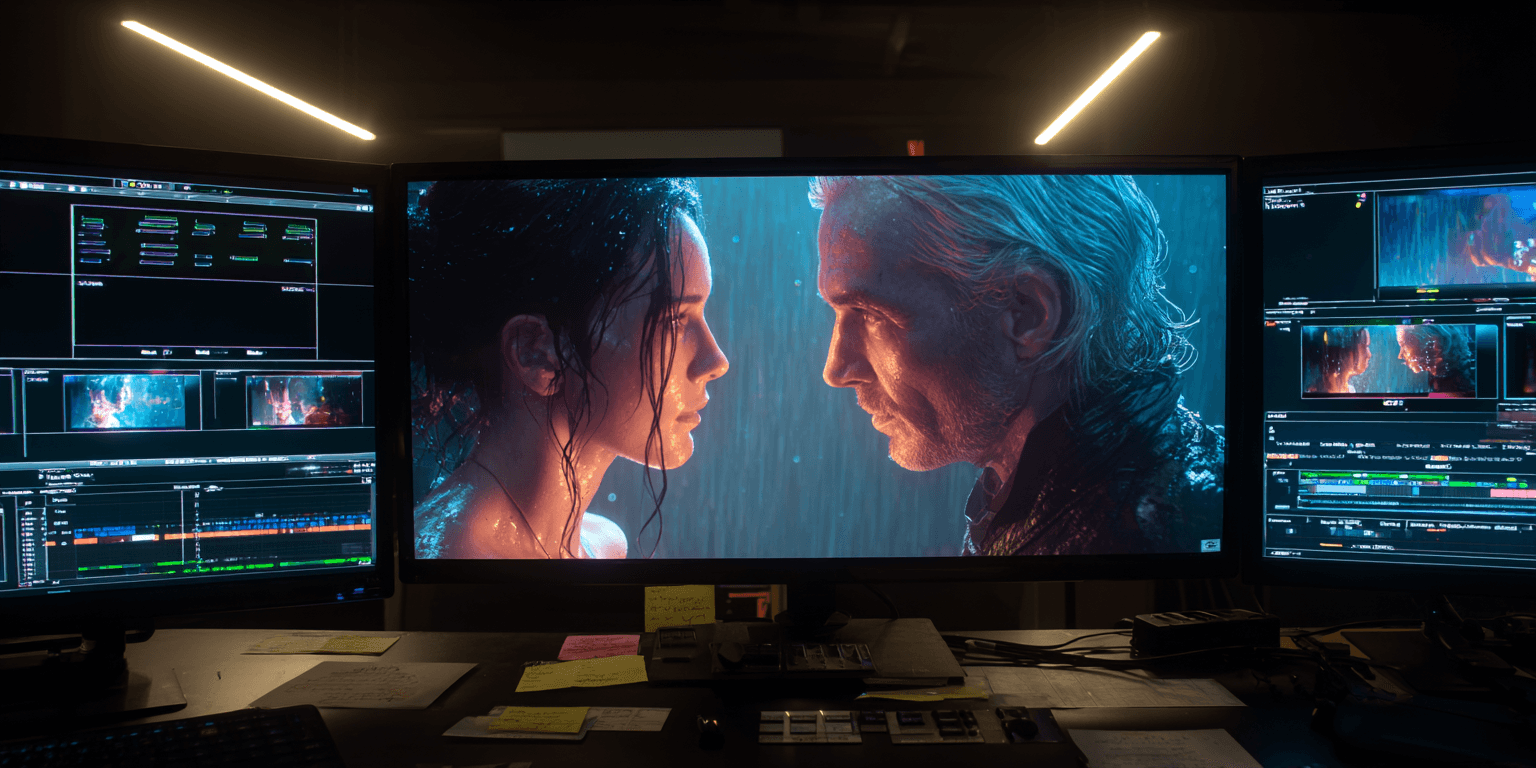

At Komodo X, every model we onboard goes through a structured evaluation against an actual production brief, with a fixed shotlist, locked character references, and a quality bar set by the client we are about to ship to. This piece is what came out of our most recent evaluation cycle, conducted in the last two weeks of April. The brief was a 15-second cinematic beat from one of our active campaigns. The shot was specifically chosen because it stresses every dimension that current models struggle with: a two-character dialogue exchange, an eye-line cross, a lighting transition, and a held final frame that has to carry emotional weight without dialogue.

Here is what we learned. I am writing this for working AI directors, not for a press cycle.

The four engines we tested

Google Veo 4. Released in late April with 4K native output, persistent world-state memory, integrated Foley generation, and a Director's Mode that lets you specify camera angles, focal lengths, and lens types directly in the prompt. The headline feature is the latent diffusion transformer that processes spatial and temporal data simultaneously, which is a meaningful architectural change from Veo 3.

OpenAI Sora 2. The current state of the art in physics simulation and on-set chaos. Where it shines is the unscripted, physical-world realism of motion. Where it struggles is tightly blocked cinematic dialogue scenes.

Seedance 2.0. The current Magic Hour benchmark leader for short cinematic shots. We have been running production work on Seedance for six weeks now, and our seedance-director skill has been refined against real shot output.

Runway Gen-4. The character consistency leader. Persistent identity across cuts is its single strongest feature, and it solved a problem that was killing AI film production a year ago.

Test 1: Two-character eye-line cross

Two characters seated across a small table. The brief specifies that character A breaks eye contact at the 3-second mark, and character B holds their gaze for the next 4 seconds. The whole shot lives or dies on whether the audience reads that eye-line shift as deliberate.

Veo 4 nailed this on the third generation. The Director's Mode lets us specify a 50mm lens equivalent, a slow push-in, and an explicit eye-line direction for both characters. The shot held its blocking through the cut, and the eye-line shift read clearly. We paid for this with longer generation times, but the output was usable on first review.

Sora 2 produced beautiful motion but failed the blocking test. Across nine generations, character B's gaze drifted in five of them. Character A's break felt accidental rather than deliberate in three more. Sora 2 is the wrong tool for this kind of disciplined shot. It wants to render a world, not stage a moment.

Seedance 2.0 was the surprise. Six generations, four of which were usable, two of which were better than Veo 4's best. Seedance does not have a Director's Mode in the same explicit sense, but the camera grammar it has internalised is cinematically literate. When we use the seedance-director skill to layer specific cinematographic intent into the prompt, the model executes it cleanly. This was the engine we shipped.

Runway Gen-4 kept the characters consistent across the cut, which was the threshold requirement, but its eye-line discipline was weaker than that of Seedance and Veo 4. Runway is still our default for projects where character persistence across many shots is the dominant constraint, but for single-shot cinematic discipline, Seedance edged it out.

Test 2: Lighting transition mid-shot

The shot calls for a practical light source to brighten over a 4-second window, simulating a door opening offscreen. This stresses the model's grasp of light continuity across a single take.

Veo 4 won this test cleanly. The persistent world-state memory holds the spatial geometry of the lighting source even when the source is off-camera, which is a real architectural advantage. Seedance handled it correctly in three of six generations. Sora 2 was inconsistent. Gen-4 was the weakest, treating the brightening as a tonal grade rather than a physical light source.

Test 3: The held final frame

Two seconds, no dialogue: character B's reaction has to carry the emotional weight of the entire 15 seconds. This is the editorial test. It is also the test no engine handles natively, because no engine knows what the previous 13 seconds were doing.

All four engines failed this test on first generation. All four required us to write the held frame as a separate shot with explicit emotional and physical state direction in the prompt. Once we did that, Seedance and Veo 4 produced usable output. Sora 2 over-acted. Runway under-acted.

Anyone telling you their model handles emotional editorial pacing automatically is selling something.

What we shipped

Seedance 2.0 for the eye-line cross and the held final frame. Veo 4 for the lighting transition. The intercut was finished in our editorial pipeline. Total generation budget across the 15 seconds was forty-seven seeds and roughly eleven hours of compute time, against a schedule that would have required a multi-day shoot in physical production.

The honest summary

If you are running short cinematic dialogue shots with strong directorial intent, Seedance 2.0 is currently the best instrument in the toolkit, and the gap is real. If your scene depends on physical-world lighting and environmental continuity, Veo 4 is the right call. If you are doing multi-shot character continuity across a longer narrative, Runway Gen-4 is still the default. Sora 2 is the strongest engine for unscripted realism, but it is the wrong engine for the kind of disciplined cinematic work most brand campaigns require.
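For readers who want to make that decision logic explicit in their own tooling, here is a minimal sketch. It assumes every shot on the shotlist is tagged with a single dominant constraint and simply looks up the engine this summary recommends for it. The `Constraint` labels, the `ENGINE_FOR` table and `route_shotlist` are hypothetical names for illustration. They are not any engine's API and not our production pipeline.

```python
from enum import Enum


class Constraint(Enum):
    """Dominant constraint of a shot (hypothetical labels, for illustration only)."""
    CINEMATIC_DISCIPLINE = "single-shot cinematic discipline with strong directorial intent"
    LIGHTING_CONTINUITY = "physical-world lighting and environmental continuity"
    CHARACTER_PERSISTENCE = "multi-shot character continuity across a longer narrative"
    UNSCRIPTED_REALISM = "unscripted physical-world realism"


# Engine per dominant constraint, following the summary above.
ENGINE_FOR = {
    Constraint.CINEMATIC_DISCIPLINE: "Seedance 2.0",
    Constraint.LIGHTING_CONTINUITY: "Veo 4",
    Constraint.CHARACTER_PERSISTENCE: "Runway Gen-4",
    Constraint.UNSCRIPTED_REALISM: "Sora 2",
}


def route_shotlist(shotlist: dict[str, Constraint]) -> dict[str, str]:
    """Map each shot name to an engine based on its dominant constraint."""
    return {shot: ENGINE_FOR[constraint] for shot, constraint in shotlist.items()}


# The three beats from this evaluation, each tagged with its dominant constraint.
campaign_beat = {
    "eye-line cross": Constraint.CINEMATIC_DISCIPLINE,
    "lighting transition": Constraint.LIGHTING_CONTINUITY,
    "held final frame": Constraint.CINEMATIC_DISCIPLINE,
}

if __name__ == "__main__":
    for shot, engine in route_shotlist(campaign_beat).items():
        print(f"{shot}: {engine}")
```

Run against this beat, the lookup reproduces what we shipped: Seedance 2.0 for the eye-line cross and the held final frame, Veo 4 for the lighting transition. A table lookup only captures the routing. It does not replace the separate, explicitly directed prompt the held frame still needed.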

"Pick by problem, not by hype cycle. The campaign-winning studios in 2026 are the ones running three or four engines simultaneously, with a clear decision logic for which engine handles which shot."— Syed Ali Kazim

The studios still arguing about which model is best are losing time they cannot afford to lose.

Syed Ali Kazim is Chief AI Technologist at Komodo X, where he leads the XON pipeline architecture and the studio's model evaluation cycles.